
In partnership with Paperspace

One of the key challenges of machine learning is the need for large amounts of data. Collecting training datasets for machine learning models poses privacy, security, and processing risks that organizations would rather avoid.

One technique that can help address some of these challenges is "federated learning." By distributing the training of models across user devices, federated learning makes it possible to take advantage of machine learning while minimizing the need to collect user data.

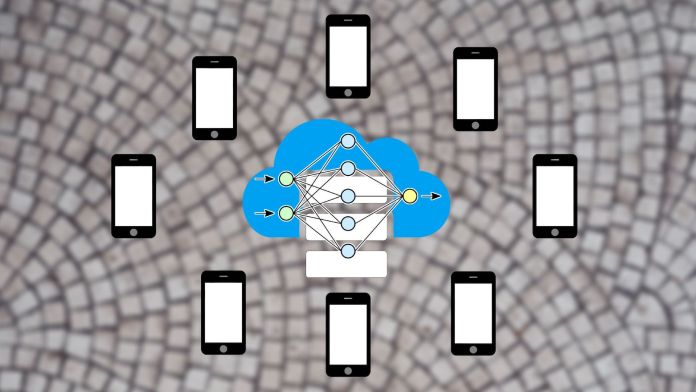

Cloud-based machine learning

The traditional process for developing machine learning applications is to gather a large dataset, train a model on the data, and run the trained model on a cloud server that users can reach through different applications such as web search, translation, text generation, and image processing.

Every time the application wants to use the machine learning model, it has to send the user's data to the server where the model resides.

In many cases, sending data to the server is unavoidable. For example, content recommendation systems cannot escape this paradigm because part of the data and content needed for machine learning inference resides on the cloud server.

But in applications such as text autocompletion or facial recognition, the data is local to the user and the device. In these cases, it would be preferable for the data to stay on the user's device instead of being sent to the cloud.

Fortunately, advances in edge AI have made it possible to avoid sending sensitive user data to application servers. This field, also known as TinyML, is an active area of research that tries to create machine learning models that fit on smartphones and other user devices. These models make it possible to perform on-device inference. Large tech companies are trying to bring some of their machine learning applications to users' devices to improve privacy.

On-device machine learning has several added benefits. These applications can continue to work even when the device is not connected to the internet. They also save bandwidth when users are on metered connections. And in many applications, on-device inference is more energy-efficient than sending data to the cloud.

Training on-device machine learning models

On-device inference is an important privacy upgrade for machine learning applications. But one challenge remains: developers still need data to train the models they will push to users' devices. This is not a problem when the organization developing the models already owns the data (e.g., a bank owns its transactions) or the data is public knowledge (e.g., Wikipedia or news articles).

But if a company wants to train machine learning models on confidential user information such as emails, chat logs, or personal photos, then collecting training data entails many challenges. The company must make sure its collection and storage policy complies with the various data protection regulations and that the data is anonymized to remove personally identifiable information (PII).

Once the machine learning model is trained, the development team must decide whether to keep or discard the training data. The team will also need a policy and procedure for continuing to collect data from users so it can retrain and update its models regularly.

This is the problem federated learning addresses.

Federated learning

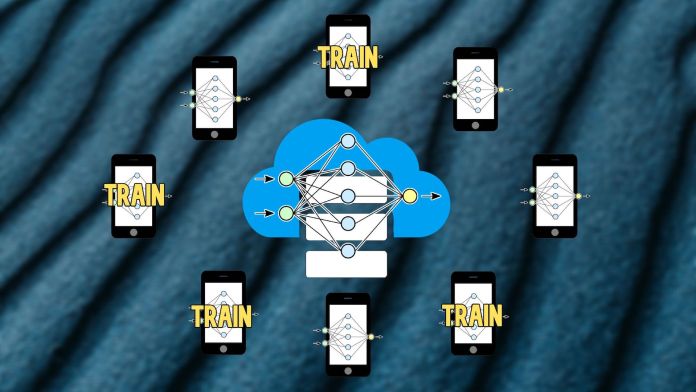

The main idea behind federated learning is to train a machine learning model on user data without the need to transfer that data to cloud servers.

Federated learning starts with a base machine learning model on the cloud server. This model is either trained on public data (e.g., Wikipedia articles or the ImageNet dataset) or has not been trained at all.

In the next stage, several user devices volunteer to train the model. These devices hold user data that is relevant to the model's application, such as chat logs and keystrokes.

These devices download the base model at an appropriate time, for instance when they are on a Wi-Fi network and connected to a power outlet (training is a compute-intensive operation and can drain the device's battery if done at the wrong time). They then train the model on the device's local data.
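The scheduling rule above can be expressed as a simple gate that a device checks before it downloads the base model. This is a minimal sketch: `DeviceState` and `is_eligible_for_training` are hypothetical names, and real mobile platforms report network and charging status through their own APIs.

```python
from dataclasses import dataclass


@dataclass
class DeviceState:
    # Hypothetical snapshot of device conditions as reported by the OS.
    on_wifi: bool
    is_charging: bool


def is_eligible_for_training(state: DeviceState) -> bool:
    """Train only on Wi-Fi and external power, so that local training does
    not consume metered data or drain the battery."""
    return state.on_wifi and state.is_charging


print(is_eligible_for_training(DeviceState(on_wifi=True, is_charging=True)))   # True
print(is_eligible_for_training(DeviceState(on_wifi=True, is_charging=False)))  # False
```

A production scheduler would likely check more conditions (for example, whether the phone is idle), but the two shown here are the ones the article names.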

After training, the devices return the trained model to the server. Popular machine learning algorithms such as deep neural networks and support vector machines are parametric: once trained, they encode the statistical patterns of their data in numerical parameters and no longer need the training data for inference. Therefore, when the device sends the trained model back to the server, it does not contain raw user data.
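To make the "parameters, not data" point concrete, here is a minimal sketch of the on-device step, assuming a toy linear model y ≈ w·x + b trained with plain gradient descent. `train_locally` is a hypothetical helper for illustration, not part of any federated learning framework.

```python
def train_locally(global_params, local_data, lr=0.1, epochs=500):
    """Start from the server's parameters [w, b], fit y ~ w*x + b to this
    device's (x, y) pairs with full-batch gradient descent on mean squared
    error, and return only the updated parameters."""
    w, b = global_params
    n = len(local_data)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in local_data:
            err = (w * x + b) - y
            grad_w += 2 * err * x / n
            grad_b += 2 * err / n
        w -= lr * grad_w
        b -= lr * grad_b
    # Only two numbers leave the device, however many examples it trained on.
    return [w, b]


# A device holding five private examples that follow y = 3x + 1.
local_data = [(x, 3 * x + 1) for x in (0.0, 0.5, 1.0, 1.5, 2.0)]
new_params = train_locally([0.0, 0.0], local_data)
print(new_params)  # close to [3.0, 1.0]
```

What the server receives is the parameter list, whose size depends on the model, not on how much user data the device holds.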

Once the server receives the trained parameters from user devices, it updates the base model with the aggregate parameter values of the user-trained models.
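The article does not specify how the server combines the returned parameters. One common choice is Federated Averaging, which takes an average of the devices' parameters, weighting each device by the number of local examples it trained on. A minimal sketch under that assumption, with `aggregate` as a hypothetical helper:

```python
def aggregate(client_updates):
    """Combine (params, num_examples) pairs into new global parameters by
    averaging each parameter, weighted by how much data each device used."""
    total = sum(n for _, n in client_updates)
    num_params = len(client_updates[0][0])
    new_global = [0.0] * num_params
    for params, n in client_updates:
        for i, p in enumerate(params):
            new_global[i] += p * n / total
    return new_global


# Two devices: one trained on 100 examples, the other on 300.
updates = [([1.0, 2.0], 100), ([3.0, 4.0], 300)]
global_params = aggregate(updates)
# The server keeps only the aggregate and drops the per-device updates.
updates.clear()
print(global_params)  # [2.5, 3.5]
```

Weighting by example count keeps a device with very little data from pulling the shared model as hard as one with a lot; an unweighted mean is the simpler alternative.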

The federated learning cycle must be repeated several times before the model reaches the level of accuracy the developers want. Once the final model is ready, it can be distributed to all users for on-device inference.

Limits of federated learning

Federated learning does not apply to all machine learning applications. If the model is too large to run on user devices, the developer will need to find other workarounds to preserve user privacy.

Moreover, the developers must make sure that the data on user devices is relevant to the application. The traditional machine learning development cycle involves intensive data cleaning, in which data engineers remove misleading data points and fill the gaps where data is missing. Training machine learning models on irrelevant data can do more harm than good.

When the training data is on the user's device, data engineers have no way of evaluating it and making sure it will benefit the application. For this reason, federated learning must be limited to applications where the user data does not need preprocessing.

Another limit of federated machine learning is data labeling. Most machine learning models are supervised, which means they require training examples that are manually labeled by human annotators. For example, the ImageNet dataset is a crowdsourced repository that contains millions of images and their corresponding classes.

In federated learning, unless outcomes can be inferred from user interactions (e.g., predicting the next word the user is typing), developers can't expect users to go out of their way to label training data for the machine learning model. Federated learning is better suited to unsupervised learning applications such as language modeling.
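As a sketch of how next-word prediction gets its labels for free, the hypothetical helper below turns text a user typed into (context, next word) training pairs: the label for each context is simply the word the user actually typed next. The fixed two-word context window is a simplifying assumption; real keyboard models use much richer context.

```python
def next_word_examples(typed_text, context_size=2):
    """Turn typed text into (context, next_word) training pairs.
    No human annotation is needed: the user's own next word is the label."""
    words = typed_text.split()
    examples = []
    for i in range(context_size, len(words)):
        context = tuple(words[i - context_size:i])
        examples.append((context, words[i]))
    return examples


print(next_word_examples("see you at the station"))
# [(('see', 'you'), 'at'), (('you', 'at'), 'the'), (('at', 'the'), 'station')]
```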

Privacy implications of federated learning

While sending trained model parameters to the server is less privacy-sensitive than sending user data, it doesn't mean the model parameters are completely free of private information.

In fact, many experiments have shown that trained machine learning models can memorize user data, and that membership inference attacks can recreate training data from some models through trial and error.

One important remedy for the privacy concerns of federated learning is to discard the user-trained models after they are integrated into the central model. The cloud server doesn't need to store individual models once it has updated its base model.

Another measure that can help is to enlarge the pool of model trainers. For example, if a model needs to be trained on the data of 100 users, the engineers can expand their pool of trainers to 250 or 500 users. For each training iteration, the system sends the base model to 100 random users from the training pool. This way, the system doesn't constantly collect trained parameters from any single user.
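The sampling scheme above can be simulated in a few lines, using the article's numbers (a pool of 500 users, 100 per round). The user IDs and the `sample_round_participants` helper are hypothetical, and the random generator is seeded only so the demonstration is repeatable.

```python
import random
from collections import Counter


def sample_round_participants(pool, per_round, rng):
    """Pick a fresh random subset of the trainer pool for one training round."""
    return rng.sample(pool, per_round)


pool = [f"user-{i}" for i in range(500)]  # hypothetical user IDs
rng = random.Random(0)                    # seeded for a repeatable demo
participation = Counter()
num_rounds = 50
for _ in range(num_rounds):
    for user in sample_round_participants(pool, per_round=100, rng=rng):
        participation[user] += 1

# With 100 of 500 users per round, a user takes part in 20% of rounds on average.
avg_share = sum(participation.values()) / (len(pool) * num_rounds)
print(avg_share)  # 0.2
```

Growing the pool while keeping the per-round count fixed lowers how often any one user's parameters reach the server.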

Finally, by adding a small amount of noise to the trained parameters and using normalization techniques, developers can considerably reduce the model's ability to memorize users' data.
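The article does not name a specific technique. One widely used combination, assumed here, is to clip each device's parameter update to a maximum L2 norm (a form of normalization that bounds any single user's influence) and then add Gaussian noise. The sketch below shows only the mechanics: `privatize_update` is a hypothetical helper, and the `clip_norm` and `noise_std` values are illustrative, not calibrated to any formal privacy guarantee.

```python
import math
import random


def privatize_update(update, clip_norm=1.0, noise_std=0.1, rng=None):
    """Rescale a device's update so its L2 norm is at most clip_norm,
    then add independent Gaussian noise to every parameter."""
    rng = rng or random.Random()
    norm = math.sqrt(sum(u * u for u in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    clipped = [u * scale for u in update]
    return [c + rng.gauss(0.0, noise_std) for c in clipped]


update = [3.0, 4.0]  # L2 norm 5.0, so it is scaled down to norm 1.0
print(privatize_update(update, noise_std=0.0))  # clipping only: about [0.6, 0.8]
print(privatize_update(update, rng=random.Random(42)))  # clipped, then noised
```

Clipping comes first so that the noise level can be chosen relative to a known bound on each update's size.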

Federated learning is gaining popularity because it addresses some of the fundamental problems of modern artificial intelligence. Researchers are constantly looking for new ways to apply federated learning to new AI applications and to overcome its limits. It will be interesting to see how the field evolves in the future.

Ben Dickson is a software engineer and the founder of TechTalks. He writes about technology, business, and politics.

This story originally appeared on Bdtechtalks.com. Copyright 2021
